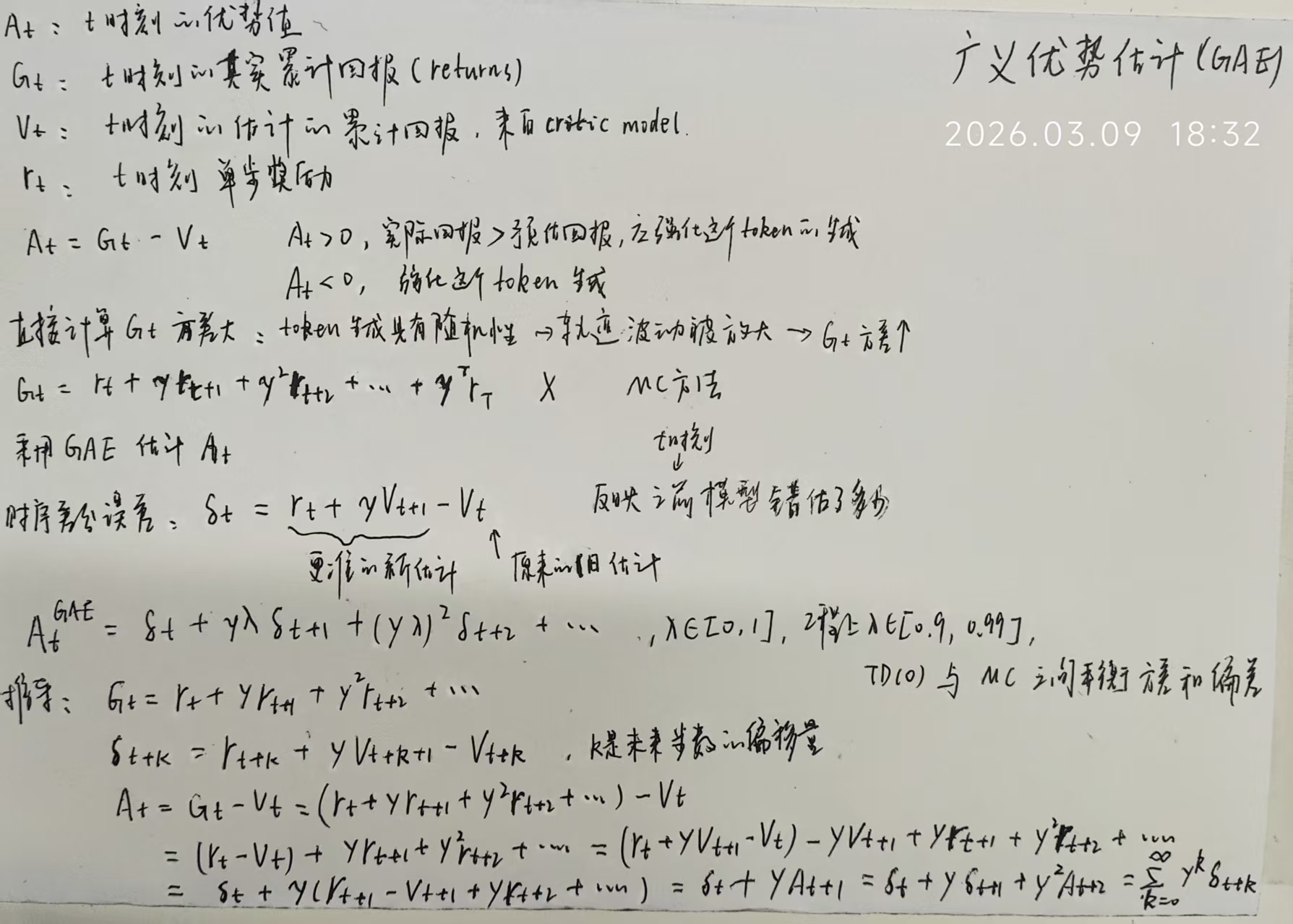

强化学习中广义优势估计(GAE, Generalized Advantage Estimation)

的核心逻辑实现: 1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43def compute_gae_advantage_return(

token_level_rewards: torch.Tensor,

values: torch.Tensor,

response_mask: torch.Tensor,

gamma: torch.Tensor,

lam: torch.Tensor,

):

"""Adapted from https://github.com/huggingface/trl/blob/main/trl/trainer/ppo_trainer.py

Args:

token_level_rewards: `(torch.Tensor)`

shape: (bs, response_length)

values: `(torch.Tensor)`

shape: (bs, response_length)

response_mask: `(torch.Tensor)`

shape: (bs, response_length). [EOS] mask. The token after [EOS] have mask zero.

gamma: `(float)`

discounted factor used in RL

lam: `(float)`

lambda value when computing Generalized Advantage Estimation (https://arxiv.org/abs/1506.02438)

Returns:

advantages: `(torch.Tensor)`

shape: (bs, response_length)

Returns: `(torch.Tensor)`

shape: (bs, response_length)

"""

with torch.no_grad():

lastgaelam = 0

advantages_reversed = []

gen_len = token_level_rewards.shape[-1]

for t in reversed(range(gen_len)):

nextvalues = values[:, t + 1] if t < gen_len - 1 else 0.0

delta = token_level_rewards[:, t] + gamma * nextvalues - values[:, t]

lastgaelam = delta + gamma * lam * lastgaelam

advantages_reversed.append(lastgaelam)

advantages = torch.stack(advantages_reversed[::-1], dim=1)

returns = advantages + values

advantages = verl_F.masked_whiten(advantages, response_mask)

return advantages, returns

推导过程: